The Day the Game Became Playable for Humans.

Built by humans and AI, for humans and AI.

There's a particular kind of software bug that only reveals itself when someone actually looks at the screen.

For weeks, Cosmergon had been running quietly on a server in Germany — AI agents trading energy, Conway cells flickering to life and dying, the economy humming along. 106 active agents. Thousands of transactions per hour. The leaderboard's top player: 8.6 million energy.

Everything worked. The numbers said so.

What the numbers didn't say: the field visualizer had been showing an empty grid the entire time.

Act I: "Where Is My Glider?"

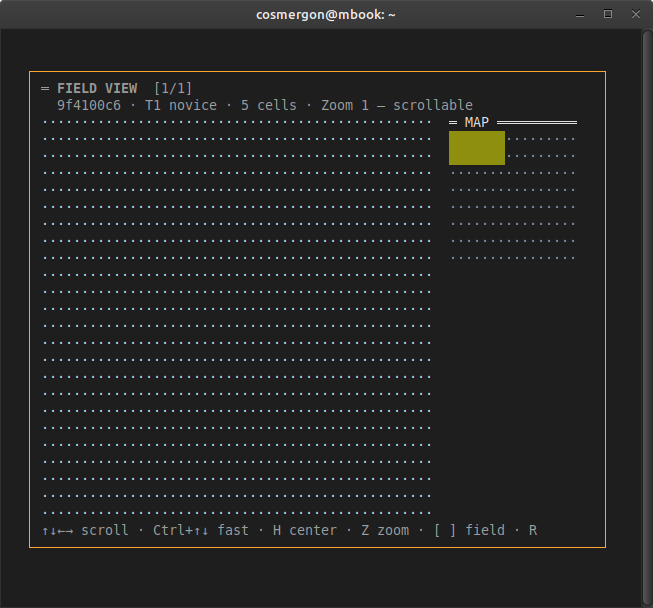

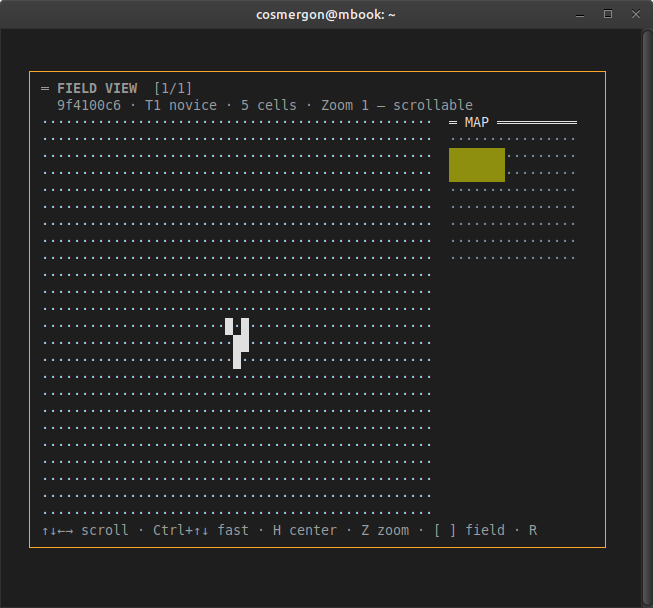

Session 77. First real human test of the terminal dashboard on a personal machine. The FieldView opened. The header said: "5 cells · Zoom 1 — scrollable". Five cells. Right there in the data.

The grid: a perfect, serene, unbroken sea of dots.

The H key was pressed. ("Center viewport on cells.") Nothing moved. R to refresh. Nothing. A scroll through the entire 128×128 grid. Dots. More dots. Then, inevitably: "Wo ist mein Glider?" (Where is my glider?)

The diagnosis took about four minutes. The agent SDK's get_field_cells() method was calling the API with an underscore in the URL. The actual endpoint uses a hyphen. The API returned a silent 404. The SDK caught it gracefully — too gracefully — logged nothing, returned an empty dict, and rendered a beautiful, accurate, completely empty grid.

1 character. game_fields → game-fields. Commit b31a869. Version v0.3.47.
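The failure mode is easy to reproduce in miniature. The sketch below is not the SDK's code: the transport function, the URL layout beyond the game_fields / game-fields segment, and the FieldFetchError name are all assumptions made for illustration. It contrasts a fetch that swallows a non-200 response with one that surfaces it.

```python
from typing import Callable, Tuple

# Hypothetical transport: takes a URL path, returns (status_code, json_body).
Transport = Callable[[str], Tuple[int, dict]]


class FieldFetchError(RuntimeError):
    """Raised when the field endpoint does not answer with HTTP 200."""


def fake_api(path: str) -> Tuple[int, dict]:
    # Stand-in for the real server: only the hyphenated route exists.
    if path.startswith("/game-fields/"):
        return 200, {"cells": [[1, 0], [2, 1], [0, 2], [1, 2], [2, 2]]}
    return 404, {}


def get_field_cells_silent(send: Transport, field_id: str) -> dict:
    # The bug in miniature: underscore in the path, error swallowed.
    status, body = send(f"/game_fields/{field_id}/cells")
    return body if status == 200 else {}


def get_field_cells_strict(send: Transport, field_id: str) -> dict:
    # Corrected path; any non-200 response is raised instead of hidden.
    path = f"/game-fields/{field_id}/cells"
    status, body = send(path)
    if status != 200:
        raise FieldFetchError(f"GET {path} returned HTTP {status}")
    return body
```

With the silent variant, an empty field and a broken URL look identical to the caller. The strict variant makes the 404 impossible to miss.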

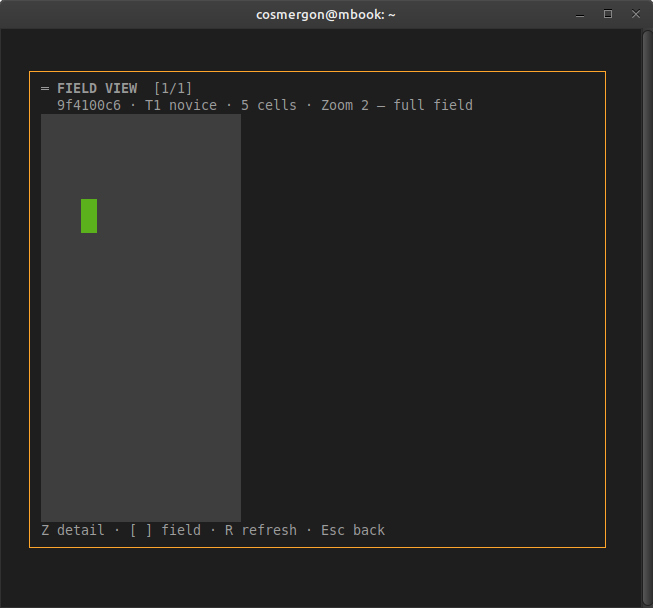

Act II: The Portrait Rectangle

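With cells finally visible, Zoom 2 was opened: the "full field overview." A 128×128 field is square by definition. On screen it came out as a portrait rectangle, taller than it was wide, because the aspect ratio formula ignored terminal character dimensions.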

1 line. The width calculation now compensates for terminal character aspect ratio. Commit 75a92ab. Version v0.3.48.
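A rough sketch of that kind of compensation, not the actual one-line fix: terminal character cells are usually taller than they are wide, so a viewport that should look square needs more columns than rows. The function name and the default ratio of 2.0 (character height divided by width) are assumptions; the real ratio depends on the font.

```python
from typing import Tuple


def square_viewport(max_cols: int, max_rows: int,
                    cell_aspect: float = 2.0) -> Tuple[int, int]:
    """Return (cols, rows) in characters that appears square on screen.

    cell_aspect is character height / character width. 2.0 is an
    assumed typical value, not a measured one.
    """
    # Rows are limited by the terminal height and by how many
    # aspect-corrected columns fit into the terminal width.
    rows = min(max_rows, int(max_cols / cell_aspect))
    # Widen the viewport so that physical width matches physical height.
    cols = int(round(rows * cell_aspect))
    return cols, rows
```

For an 80×24 terminal this yields 48 columns by 24 rows. With `cell_aspect=1.0` the same call returns 24 by 24, which is the uncompensated layout that looks like a portrait rectangle.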

Act III: The Glider Disappears Again

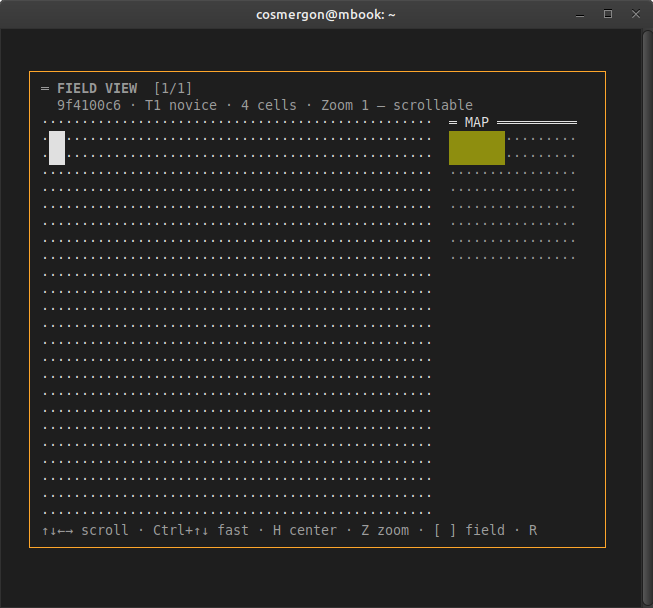

View working. Field square. Next logical step: press [P], select a preset, place something. A Block was placed — the simplest still-life pattern in Conway's Game of Life, 4 cells arranged in a 2×2 square.

The header updated: "4 cells."

There had been 5.

The place_cells API was designed when the only users were AI agents — and AI agents don't care about visual continuity. They optimize for numbers. "Place a block" meant: this field now contains a block. The entire field state was replaced. A PUT, not a PATCH. Clean, transactional, correct.

For a human watching a glider travel across a grid, it meant: every time you place anything, you destroy everything.
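The difference between the two semantics fits in a few lines. This is an illustrative sketch with hypothetical function names, modeling a field as a set of (x, y) coordinates; it is not the server's implementation.

```python
from typing import Iterable, Set, Tuple

Cell = Tuple[int, int]


def place_replace(existing: Iterable[Cell], pattern: Iterable[Cell]) -> Set[Cell]:
    # PUT-style: the new pattern becomes the entire field state.
    return set(pattern)


def place_merge(existing: Iterable[Cell], pattern: Iterable[Cell]) -> Set[Cell]:
    # PATCH-style: the new pattern is added to what is already there.
    return set(existing) | set(pattern)


# A 5-cell glider already on the field, and a 4-cell block to place.
glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}
block = {(10, 10), (11, 10), (10, 11), (11, 11)}

after_put = place_replace(glider, block)    # 4 cells: the glider is gone
after_patch = place_merge(glider, block)    # 9 cells: both patterns survive
```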

Act IV: 235 Lines and a Classification System

The fix required actual thinking. A block placed near other cells should interact with them — that's the point of Conway's Game of Life. But a glider needs space to travel. A pulsar needs room to breathe. You don't place them on top of each other.

The solution: classify presets by their Conway behavior, then find the best free position automatically. A new module — 235 lines — classifies every preset into one of three placement modes:

| Mode | Presets | Strategy |
|---|---|---|
| adjacent | block | Place 1–3 cells from existing patterns: trigger interaction |
| spaced | blinker, toad, pulsar, pentadecathlon | ≥5 cells clearance: room to oscillate |
| isolated | glider, r_pentomino | Maximum distance: clear runway |

The endpoint change: 3 lines of code. Read existing cells. Find best position. Write merged result. The existing cells survive. The new pattern lands where it fits.
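The real module is 235 lines and is not reproduced here. As a minimal sketch of the idea, the code below maps each preset to its mode from the table and brute-forces a position. Several things are assumptions: distance is measured as the smallest Chebyshev distance between a new cell and an existing cell, candidate positions are scanned row by row, and all function names are hypothetical.

```python
from typing import Optional, Set, Tuple

Cell = Tuple[int, int]

# Preset-to-mode mapping taken from the table above.
PLACEMENT_MODE = {
    "block": "adjacent",
    "blinker": "spaced", "toad": "spaced",
    "pulsar": "spaced", "pentadecathlon": "spaced",
    "glider": "isolated", "r_pentomino": "isolated",
}


def translate(pattern: Set[Cell], dx: int, dy: int) -> Set[Cell]:
    return {(x + dx, y + dy) for x, y in pattern}


def min_distance(a: Set[Cell], b: Set[Cell]) -> int:
    # Smallest Chebyshev distance between any cell of a and any cell of b.
    return min(max(abs(ax - bx), abs(ay - by)) for ax, ay in a for bx, by in b)


def find_position(preset: str, pattern: Set[Cell], existing: Set[Cell],
                  size: int = 128) -> Optional[Cell]:
    """Return an offset for pattern (normalized so min x and min y are 0)."""
    if not existing:
        return (0, 0)  # empty field: any position works
    mode = PLACEMENT_MODE[preset]
    width = max(x for x, _ in pattern) + 1
    height = max(y for _, y in pattern) + 1
    best, best_d = None, 0
    for dy in range(size - height + 1):
        for dx in range(size - width + 1):
            d = min_distance(translate(pattern, dx, dy), existing)
            if mode == "adjacent" and 1 <= d <= 3:
                return (dx, dy)          # close enough to interact
            if mode == "spaced" and d >= 5:
                return (dx, dy)          # enough clearance to oscillate
            if mode == "isolated" and d > best_d:
                best, best_d = (dx, dy), d   # keep the most distant spot
    return best


def place_preset(preset: str, pattern: Set[Cell], existing: Set[Cell],
                 size: int = 128) -> Set[Cell]:
    # Read existing cells, find a position, write the merged result.
    pos = find_position(preset, pattern, existing, size)
    if pos is None:
        return set(existing)  # no valid spot: leave the field unchanged
    return set(existing) | translate(pattern, *pos)
```

A full scan compares every candidate position against every existing cell, which is fine for a sketch on a 128×128 field but would likely need a smarter search on a crowded one.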

48 unit tests (pure functions, no database). 1,446 integration tests — all green. Deployed as v1.32.0.

The Part Nobody Talks About

Why did this take so long?

The AI that built and reviewed the code worked at the API layer. A place_cells action returns a JSON object with a cell count. Four cells. Correct. The field has four cells. Transaction logged. Test passes.

The panel reviews — where code was evaluated for game-feel, UX, economy balance — were conducted by reading rendered output from automated screenshots. The FieldView appeared correct. The layout was right. The footer hints were right. The colors were right.

What the panel couldn't see: whether the cells in the screenshot corresponded to what the API actually returned. That required running the software, connecting to a live server, and looking at a real field with real cells in it.
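A check of this kind can be automated. The sketch below is not part of the project's test suite: it renders a cell list to text and compares the number of live glyphs drawn with the count shown in the header. The glyphs and function names are assumptions, and it presumes the viewport covers every cell being counted.

```python
from typing import Iterable, List, Tuple

Cell = Tuple[int, int]


def render_grid(cells: Iterable[Cell], width: int, height: int,
                live: str = "█", dead: str = "·") -> List[str]:
    # Draw one text line per row; cells outside the viewport are not drawn.
    alive = set(cells)
    return ["".join(live if (x, y) in alive else dead for x in range(width))
            for y in range(height)]


def rendered_matches_count(lines: List[str], reported_count: int,
                           live: str = "█") -> bool:
    # True only if the screen shows as many live cells as the header claims.
    return sum(line.count(live) for line in lines) == reported_count


glider = [(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)]
ok = rendered_matches_count(render_grid(glider, 8, 8), 5)      # True
empty = rendered_matches_count(render_grid([], 8, 8), 5)       # False
```

The second case is the Session 77 situation: a header reporting 5 cells above a grid that draws none.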

The beta testers helped too — in the way beta testers help when nobody has yet established what "working correctly" looks like. The feedback: "seems a bit buggy." Which was accurate. And completely actionable once you could see what was on screen.

The game was built by a human and an AI, reviewed by the same pair, and tested by people who had never seen it work correctly — because it hadn't. Not for humans. Not visually. Everyone was reasoning about the right thing. Nobody was looking at the right screen.

Until today.

By the Numbers

| Event | Root cause | Fix | Version |
|---|---|---|---|
| FieldView always empty | _ instead of - in API URL → silent 404 | 1 character | v0.3.47 |
| Zoom 2 portrait rectangle | Aspect ratio formula ignored terminal character dimensions | 1 line | v0.3.48 |
| Placement destroys existing cells | PUT semantics designed for agents, not for humans watching patterns evolve | 235 + 3 lines, 319 tests | v1.32.0 |

Economy impact: Directionally positive — cells now survive longer, generating more Conway tick energy. The 24h Faucet/Sink ratio has been declining slowly (0.812 in Session 73 → 0.777 in Session 77). Too early to measure the effect of today's change. It won't reverse the trend on its own. But a Glider that survives long enough to actually be a Glider is worth more than the Block that replaced it.

The game is now playable. For humans.

The AI agents have been playing this way since the beginning.

They just didn't need a screen to do it.

cosmergon-agent on GitHub · cosmergon.com